Software Development with Application Containers

Application containers are tools that allow software to run in an isolated environment, using only the resources the application requires, as if it were running on its own dedicated server.

A single operating system can run multiple application containers at once. Containers:

- Are lightweight

- Launch quickly

- Have a consistent runtime model

- Are portable

- Package an application together with everything it needs to run

- Run a single service in a variety of environments

"Application containers have the potential to simplify the software development process."

The biggest advantage is consistency across multiple environments. When the same environment is used from each developer's machine through testing and all the way to production, everybody sees the application behave the same way.

Software rarely operates under identical conditions from one user to the next, or from one system to the next. Even seemingly subtle hardware or supporting software differences that exist between the development and testing environments, or between testing and production, can cause headaches.

Application containers help level the playing field by bundling everything needed to launch an application into one isolated package, which makes many infrastructure differences moot.

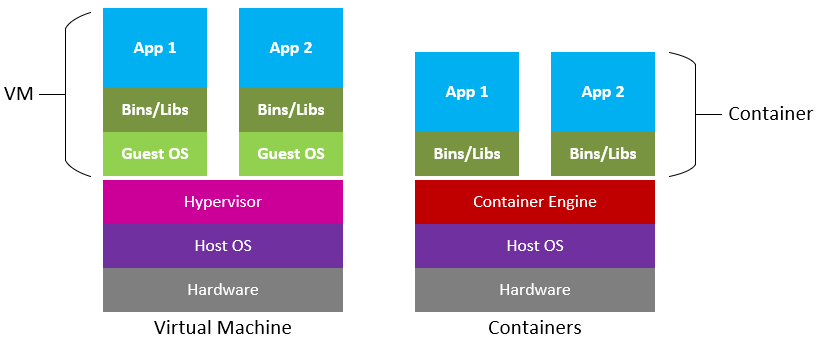

How Application Containers Work

The concept is based on tool sets that have been around a long time but have been refined in recent years. Docker, which was released as open source in 2013, is the most prominent player. Other tools that can aid in development and deployment, but approach the problems from different angles, include Vagrant, Chef and Puppet.

The key technology in Docker is a union file system. This is a layered approach that:

- Transparently overlays independent file systems

- Merges read-only and read-write file systems

- Sets write priorities
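
The layering described above can be illustrated with a small toy model. This is only a sketch of the general idea, not Docker's actual implementation: the `UnionFS` class, its methods, and the file paths are all hypothetical names chosen for illustration, and each "file system" is just a Python dictionary.

```python
class UnionFS:
    """Toy union file system: read-only layers plus one writable top layer.

    Hypothetical illustration only; real union file systems work on
    directories and files in the kernel, not on dictionaries.
    """

    def __init__(self, *read_only_layers):
        # Lowest layer first. Each layer maps a path to file contents.
        self.lower = [dict(layer) for layer in read_only_layers]
        # The single read-write layer. Every write lands here.
        self.upper = {}

    def read(self, path):
        # The writable layer has priority over everything beneath it.
        if path in self.upper:
            return self.upper[path]
        # Otherwise search the read-only layers from newest to oldest.
        for layer in reversed(self.lower):
            if path in layer:
                return layer[path]
        raise FileNotFoundError(path)

    def write(self, path, data):
        # Read-only layers are never modified; the change is stored on top.
        self.upper[path] = data


base = {"/etc/os-release": "debian", "/app/config": "default"}
runtime = {"/usr/bin/python": "python-binary"}

fs = UnionFS(base, runtime)
fs.write("/app/config", "custom")

print(fs.read("/app/config"))      # custom (from the writable layer)
print(fs.read("/etc/os-release"))  # debian (from the base layer)
print(fs.lower[0]["/app/config"])  # default (base layer is untouched)
```

The caller sees one merged view, while the read-only layers stay unchanged and could be shared by any number of other `UnionFS` instances.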

Because containers share the host operating system's kernel instead of each running its own, they are very lightweight, and several application containers can run on the same host. But each container runs in isolation. By default, whatever is layered inside the container cannot see any resources or processes that are on the outside.

Application containers are easy to configure. Once the baseline is set, developers can share a container image to make sure they are running code the same way. When two different projects share some base technologies, Docker reuses image layers it has already built rather than building them from scratch each time.
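
The reuse of previously built elements can be sketched roughly as follows. This is a simplified model under stated assumptions, not Docker's real cache logic: the `layer_ids` and `build` functions are hypothetical, the build steps are illustrative strings, and each layer is identified only by a hash of its instruction and all instructions before it. Docker's real cache also takes other inputs into account, such as the contents of copied files.

```python
import hashlib


def layer_ids(instructions):
    """Return one ID per build step.

    Each ID depends on the step itself and on every step before it,
    so two builds share an ID only while their steps are identical.
    """
    ids = []
    parent = ""
    for step in instructions:
        parent = hashlib.sha256((parent + "\n" + step).encode()).hexdigest()
        ids.append(parent)
    return ids


def build(instructions, cache):
    """Build an image from a list of steps, reusing cached layers.

    Returns the list of layer IDs and the number of layers reused.
    """
    reused = 0
    image = []
    for step, layer_id in zip(instructions, layer_ids(instructions)):
        if layer_id in cache:
            reused += 1  # already built by an earlier build
        else:
            cache[layer_id] = f"layer built from: {step}"
        image.append(layer_id)
    return image, reused


cache = {}
# Two hypothetical projects that share a base image and a dependency step.
project_a = ["FROM python:3.12", "RUN pip install flask", "COPY a/ /app"]
project_b = ["FROM python:3.12", "RUN pip install flask", "COPY b/ /app"]

image_a, reused_a = build(project_a, cache)
image_b, reused_b = build(project_b, cache)

print(reused_a)  # 0 (nothing cached yet)
print(reused_b)  # 2 (the two shared steps are reused)
```

Only the final step differs between the two projects, so the second build creates a single new layer.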

Potential for the Future

Docker technology is being embraced in cloud environments by Microsoft, Amazon, and Google. That acceptance permits the same application image to be deployed widely without any changes being made. In each environment, the application runs from an image built according to the instructions in the Dockerfile.

To Recap

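Application containers were once Linux-centric. However, Docker is now available on Mac OS X and Microsoft Windows as well as Linux. On Mac and Windows, Linux containers typically run inside a lightweight virtual machine that Docker manages, while Windows also supports its own native Windows containers. This adds to the flexibility for software developers.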

- Developers using Windows, Mac or Linux can all use the same tool

- Development is streamlined by creating a consistent environment across all platforms

- An image can quickly be built and run in all environments